News

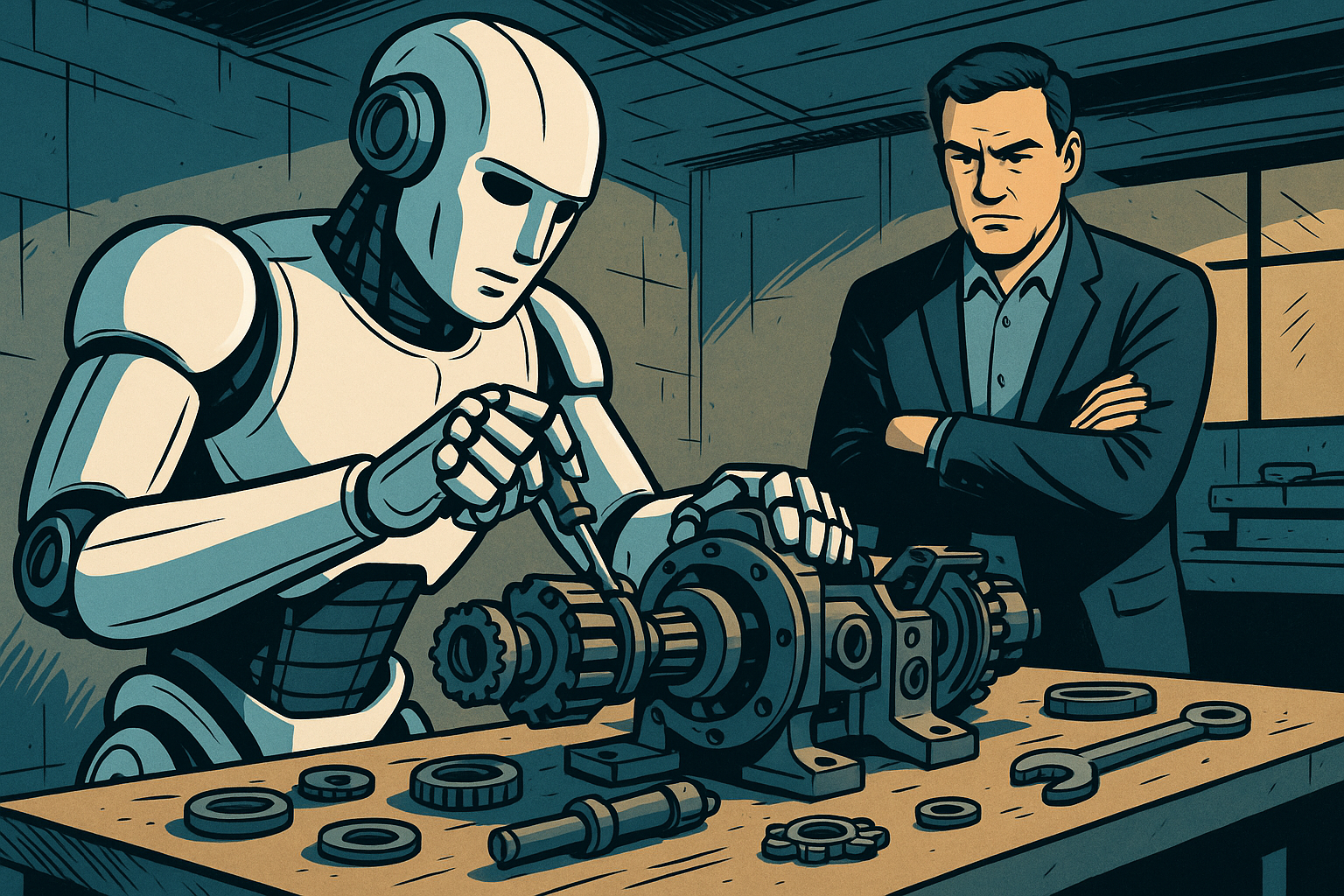

Agentic AI in Southeast Asia: Striking the Balance Between Autonomy and Human Oversight

A Technological Frontier with Humans at the Helm

Agentic AI, meaning systems that act, learn, and strategize autonomously, is generating buzz as the next frontier of artificial intelligence. In Southeast Asia, this emerging wave carries the promise of transformative economic gains, but also the sobering reminder that human oversight remains essential. Capgemini’s latest research places this tension squarely at the center of the conversation.

The Promise Meets the Reality

Capgemini Research Institute estimates agentic AI could unlock a staggering $450 billion in economic value by 2028. Yet, adoption remains cautious. Only 2% of organizations have scaled deployment, with most still navigating pilot phases or early planning. This cautious approach highlights a broader dynamic—enormous potential restrained by the need for trust, infrastructure, and clarity on outcomes.

What Defines Agentic AI?

Jason Hardy, Chief Technology Officer for AI at Hitachi Vantara, explains that agentic AI goes beyond the passive responsiveness of generative models. These systems operate more like a coordinated team of domain experts. They act proactively, learn from experience, strategize in real time, and execute tasks toward defined objectives in dynamic environments. The leap from generating text to driving real-world results marks a fundamental shift in how enterprises can leverage artificial intelligence.

Early Use Cases Show Promise

Initial applications of agentic AI in Southeast Asia are already delivering concrete results, particularly in IT operations and cybersecurity. In IT, agentic systems are automating essential tasks such as data classification, storage optimization, compliance reporting, and predictive maintenance. In cybersecurity, they’re proving invaluable by identifying anomalies, isolating threats, and initiating fail-safes like backup recovery—often in real time. These outcomes highlight the technology’s ability to elevate both routine and high-stakes workflows across industries.

Laying the Groundwork for Adoption

Hardy emphasizes that successful adoption depends on more than enthusiasm. Companies must first ensure their data is well-classified, secure, and governed. Beyond data hygiene, core technologies like multi-agent orchestration, persistent memory, and real-time resource allocation must be in place to make agentic AI scalable. For most businesses, a strategic rollout begins with IT operations, where the risks are manageable and the return on investment can be proven quickly before expanding into more customer-facing or supply chain systems.

Economic and Workforce Impacts

The broader economic implications for Southeast Asia are profound. IDC projects that AI—including generative AI—could contribute as much as $120 billion to the GDP of ASEAN-6 countries by 2027. Hardy believes that agentic AI, in particular, may accelerate that trajectory even faster than current models suggest. However, this growth won’t come without workforce disruption. In Indonesia, more than 57% of job roles may be altered or displaced by AI. The World Economic Forum predicts AI could create 11 million new jobs in Southeast Asia by 2030 while displacing 9 million, with disproportionate effects on women and younger workers.

Companies are already responding. Microsoft has pledged $1.7 billion toward AI infrastructure and education in Indonesia and launched significant reskilling programs across Malaysia and other regional markets. These investments are designed not just to build technological capacity but also to soften the social and economic friction that rapid AI deployment inevitably brings.

The Human Imperative

A consistent theme across Capgemini’s findings is the irreplaceable role of human oversight. Nearly three-quarters of executives believe the cost of human involvement in AI workflows is justified, and nine in ten say oversight is either cost-neutral or beneficial. Rather than eliminating human jobs, agentic AI changes the nature of work itself. The human role shifts from task execution to strategy, governance, and coordination—roles that require new skills in auditing, ethics, and operational oversight.

According to Hardy, the future of agentic AI hinges not just on its technical capacity, but on how organizations prepare their leaders, HR teams, and governance bodies to manage it. The era of “set-and-forget” AI is over; today’s agentic systems demand continuous human engagement, not just in development but in day-to-day operations and decision-making.

A Vision Forward

To successfully navigate the era of agentic AI, Southeast Asia must prioritize foundational readiness. That means building strong data and orchestration infrastructure, deploying AI strategically in low-risk environments like IT, and investing heavily in workforce development. But it also means constructing a culture of trust and transparency around AI. Governance frameworks will need to be robust and enforceable, balancing innovation with accountability.

Autonomy with Accountability

Agentic AI in Southeast Asia represents more than a technological leap—it is a social, economic, and ethical inflection point. The region stands poised to harness significant value from AI-driven autonomy, but only if it keeps human agency at the core of that transformation. The real story isn’t about machines acting independently—it’s about how we guide those machines to act wisely, responsibly, and in harmony with the needs of the societies they’re meant to serve.

News

Grokipedia: Elon Musk’s AI Encyclopedia Challenges Wikipedia’s Throne

In late October, Elon Musk’s xAI quietly flipped the switch on what might be its most ambitious project yet — an AI-written encyclopedia called Grokipedia. Billed as a “smarter, less biased” alternative to Wikipedia, it launched with nearly 900,000 articles generated by the same AI model that powers Musk’s chatbot, Grok.

But just a day in, Grokipedia is already stirring controversy — not for its scale, but for what’s missing: citations, community editing, and transparency. The promise of a perfectly factual AI encyclopedia sounds futuristic. The reality looks much more complicated.

From Grok to Grokipedia: A New Kind of Knowledge Engine

At its core, Grokipedia is an AI-driven encyclopedia built by xAI, Musk’s research company now tightly integrated with X.com. Its purpose? To use AI to “rebuild the world’s knowledge base” with cleaner data and fewer ideological biases.

Unlike Wikipedia, where every article is collaboratively edited by humans, Grokipedia’s content is written by AI — Grok, specifically. Users can’t edit entries directly. Instead, they can submit correction forms, which are supposedly reviewed by the xAI team.

Within 48 hours of launch, the site claimed 885,000 entries spanning science, politics, and pop culture. Musk called it “a massive improvement over Wikipedia,” suggesting that human editors too often inject bias.

The Big Difference: No Editors, Just Algorithms

If Wikipedia is a “crowdsourced truth,” Grokipedia is an algorithmic truth experiment. The difference is stark:

- Wikipedia has visible revision histories, talk pages, and strict sourcing rules.

- Grokipedia offers AI-written pages with minimal citations and no public edit trail.

In a test comparison, Grokipedia’s entry on the Chola Dynasty contained just three sources, versus more than 100 on Wikipedia. Some political entries mirrored phrasing used by X influencers, raising concerns about subtle ideological leanings.

xAI claims the platform will get “smarter over time,” as Grok learns from user feedback and web data. But so far, its process for verification or bias correction remains completely opaque.

Open Source or Open Question?

Musk has said Grokipedia will be “fully open source.” Yet, as of publication, no public repository or backend code has been released. Most of the content appears to be derived from Wikipedia text, which is licensed under CC BY-SA 4.0, with small AI edits layered on top.

This raises a key issue: if Grokipedia reuses Wikipedia’s text but removes human verification, is it really a competitor — or just a remix?

Wikimedia Foundation’s statement pulled no punches:

“Neutrality requires transparency, not automation.”

The Vision — and the Risk

Grokipedia fits neatly into Musk’s broader AI ecosystem strategy. By linking Grok, X, and xAI, Musk is building a self-sustaining data loop — one where AI tools generate, distribute, and learn from their own content.

That’s powerful — but also risky. Without clear human oversight, AI-generated reference material can reinforce its own mistakes. One factual error replicated across 900,000 entries doesn’t create knowledge; it creates illusion.

Still, Musk’s team insists that Grokipedia’s long-term mission is accuracy. Future versions, they say, will integrate live data from trusted sources and allow community fact-checking through X accounts.

For now, it remains a closed system, promising openness later.

A Future Encyclopedia or a Mirage of Truth?

Grokipedia’s arrival feels inevitable — the natural next step in a world where generative AI writes headlines, code, and essays. But encyclopedic truth isn’t just about writing; it’s about verification, accountability, and trust.

As one early reviewer on X put it:

“It’s like Wikipedia written by ChatGPT — confident, clean, and not always correct.”

If Musk can solve those three things (verification, accountability, and trust), Grokipedia could become a defining reference for the AI era.

If not, it risks becoming exactly what it set out to replace: a knowledge system where bias hides in plain sight.

Grokipedia is live now at grokipedia.com, with full integration expected in future versions of Grok and X.com.

News

ChatGPT Atlas Review: OpenAI’s New AI Browser Feels Like Research With a Co-Pilot

I’ve been testing ChatGPT Atlas — OpenAI’s brand-new AI browser — for about four hours since its release, and in my opinion, it’s one of the most intriguing tools the company has shipped in years. Instead of just loading pages, Atlas thinks about them. It reads, summarizes, and connects what you’re looking at, almost like having a reasoning engine built into every tab.

First Impressions

After installing Atlas, I expected another Chrome-style browser with a ChatGPT plug-in. What I found was something closer to a full AI workspace. Each tab carries its own ChatGPT context, capable of reading and summarizing web content instantly.

In my short time testing, I noticed how natural it feels to ask questions right inside a page. While reading a technical paper, I typed, “Explain this in plain English,” and Atlas responded in a sidebar with a clear summary and citations. Even in just a few hours, that feature changed how I browse.

What also stood out to me is how Atlas remembers. When I opened a new tab on the same topic, it automatically referenced what I had read earlier. It feels less like jumping between pages and more like continuing a conversation.

Key Features That Impressed Me

1. Inline Queries That Make Sense

Highlight text on any webpage, ask a question, and Atlas gives an instant, sourced explanation. In my opinion, this single feature turns the browser into a genuine research companion.

2. “Action Mode”

Atlas can fill forms, pull structured data, or run quick code snippets. I tried it on a couple of booking pages and spreadsheets — it worked, though slower than expected. It’s powerful, but you’ll still want to double-check what it does.

3. Visual Insights

Select a table or dataset, and Atlas can generate quick visual summaries like charts or sentiment heatmaps. I tested it on an economics article; the graph it generated was simple but accurate enough to use.

Early Friction Points

Based on my short testing window, Atlas isn’t flawless. When summarizing long PDFs, it sometimes mixes headings or ignores footnotes. It also generated a few off-target details when I gave vague prompts. Memory occasionally resets, breaking the “continuous reasoning” flow.

Performance is decent, though heavy AI summarization noticeably spikes CPU usage. On my MacBook Air, multiple “analyze” tabs made the fan run nonstop.

Privacy and Security Notes

OpenAI says browsing data stays local and encrypted unless you explicitly opt in for cloud sync. From what I saw in settings, each tab can be memory-isolated, which helps. Still, since Atlas effectively reads every page, I’d avoid testing it on confidential or login-protected material for now.

How It Stacks Up

I’ve used Arc Search and Perplexity Desktop, and Atlas already feels more cohesive. Arc helps you find; Perplexity helps you read; Atlas does both — and reasons about what it finds.

If I had to summarize the difference after a few hours: Arc shows you results, Perplexity explains them, but Atlas understands context across pages.

Who It’s For

From what I’ve seen so far:

- Researchers and students will benefit most from live summarization and citation support.

- Writers and analysts can use it as an on-page note taker.

- Developers can run snippets and query APIs directly inside web tools.

- Casual users might just appreciate how it simplifies everyday reading.

My Verdict After Four Hours

Even after only a few hours, I can see where this is going. Atlas feels like more than a browser — it’s a reasoning layer for the web.

In my opinion, OpenAI isn’t trying to reinvent Chrome; it’s trying to reinvent how we think while browsing. There are still rough edges, bugs, and slowdowns, but the core idea — browsing that reasons with you — feels like a glimpse of the next computing shift.

If you get access, I’d suggest experimenting for a few hours as I did. Atlas doesn’t just show you the internet; it helps you make sense of it.

AI Model

What You Can Do With Sora 2 — Your Personal Video‑Dream Factory

Picture this: you, starring in a cinematic short set in a world you imagine, all from a simple photo and a line of text. That’s the promise of Sora 2, the next-generation video engine from OpenAI that now lets everyday users bring their fantasies to vivid life.

The Vision: You Can Be the Star

At its heart, Sora 2 gives everyone the chance to generate an original video of themselves — and by “themselves” we mean you can appear, or your likeness can appear, in scenes you invent. Want to see yourself dancing on the moon? Or riding a dragon above Tokyo? Or being the hero of a story that has never been told? Sora 2 says yes.

Sora 2 is more physically accurate, realistic and controllable than prior systems. It supports synchronized dialogue and sound effects. The message is clear: you are no longer just a viewer of video — you can be its star, its director, its hero.

All those little fantasies you’ve had, the ones you never acted on, can now play out on screen. Want a short film of yourself as a figure skater landing a triple axel with a cat on your head? That’s a real example from OpenAI. In other words: if you can describe it, you can see it.

What People Are Already Doing with Sora (and Sora 2)

While Sora 2 is very new, early users have begun to experiment in interesting ways. The app allows uploading photos or entering a prompt and producing short videos that remix or reinterpret your image in imaginative settings.

Some of the more popular uses include:

- People inserting themselves into wild, cinematic backgrounds — such as “me on a dragon in a fantasy cityscape”.

- Short, shareable clips that feel like magic: “me walking through Tokyo in lantern light”, or “me surfing a giant wave under neon city lights”.

These aren’t just fantasy scenarios; real users are now creating and sharing them as actual demos. And while specific numbers on viral clips aren’t available yet, the sheer variety and creativity on display already show the tool’s appeal.

Adoption & Download Figures

Here are the key figures so far:

- Sora exceeded one million downloads in less than five days after release.

- It reached No. 1 on Apple’s App Store during its launch week.

- While OpenAI hasn’t shared exact user numbers, momentum is clearly building fast, especially among creators and digital storytellers.

Why You Should Download Sora 2 (and Generate)

If you’re on the fence: here’s why you should give it a go.

First: You don’t need a high‑end video camera, a full film crew, or months of editing. All you typically need is a photo of yourself (or at least a clear face image) and a text prompt describing what you want. Upload your photo or choose to appear, write a one‑sentence (or more) description of your scene, and the system generates a short video.

Second: The output can be astonishing. You could end up with a short cinematic clip of yourself, with realistic motion, sound, voice and environment. The transformation from your still photo + prompt, to you appearing in a short video scenario, is magical and empowering.

Third: This is your chance to experiment. The barrier to entry is low. Even if the result isn’t “perfect Hollywood”, you’ll have something you made. You’ll star in a moment of your own creation. That alone is worth a shot.

How to Get Started: Basics of Video Generation

Here’s a step‑by‑step of what getting started looks like:

- Download the Sora app (currently invite-only on iOS, in regions such as the U.S. and Canada) and sign in with your OpenAI/ChatGPT credentials.

- Choose to upload a photo of yourself (clear face, good lighting helps).

- Write a text prompt describing the scene you want. For example: “Me in a futuristic city at dusk flying on a hoverboard above neon lights”.

- Optionally specify style (cinematic, anime, photorealistic) if the interface allows.

- Hit generate and wait for the clip to render (clips are short: currently up to ~15 seconds for free users, up to 25 seconds for Pro).

- Review the video, share it, or remix it if you like.

Repeat: Upload your photo + write your prompt → get your video. It’s that simple.

And again: The result can be you, living your fantasy, starring in the video you’ve always imagined. You are not just a bystander — you are the protagonist.

What the Result Can Be: Your Fantasy, Realised

Imagine this: you open the app, upload your photo, type “Me stepping onto the red carpet at a global awards show, cameras flashing, lights swirling, I smile and hold the trophy aloft”. A few minutes later you have a video where you appear in that scene. You could imagine yourself “On the moon in astronaut gear planting a flag that says ‘I Made It’” or “Riding a black stallion across a desert at dawn with dramatic skies”. These are not just possibilities — they’re actual use‑cases people are exploring with Sora 2.

Your fantasies — yes, even the ones you shelved because you thought they were too far‑fetched — can now live as a short cinematic moment. Because of the ease, you don’t need to wait. You don’t need a director. You don’t need a production crew. The tools are in your hands.

Final Encouragement: Go Create

If you’ve ever thought “I’d love to make a film about myself” or “I wish I could see myself in a wild scene”, now is the time. Download the Sora 2-powered app, pick a photo of yourself, type your prompt, and hit generate. You’ll get a short video of yourself in your made-up world. Use it for fun, for social sharing, for a creative experiment. Let your imagination run wild.

Don’t wait for “perfect”. The first one you make might be rough around the edges — but that’s okay. Creating is more important. Even a 10‑15 second clip starring you is a step into a new realm. Accept that you’re the star of your own story — and let Sora 2 bring it to life.

Go ahead. Upload that photo. Write that sentence. See yourself in a scene you’ve always dreamed of.
